Cold email is not dead. Most teams underperform because they are missing email message fit. They keep tweaking subject lines, adding AI personalization, or increasing volume, but reply rates do not improve because the core alignment is off: the offer is not compelling enough for the segment, the message does not match the buyer’s priorities, or the timing is wrong. That is why even well-written campaigns fail.

A reliable cold email framework fixes this by turning outbound into a structured testing process. You validate offer-message combinations first, then tighten targeting, then re-evaluate based on response quality, and finally use feedback to sharpen positioning. This phased approach protects your total addressable market (TAM), accelerates learning, and creates a repeatable path to meetings rather than random wins.

This guide breaks down the exact 4-phase system we use to help teams book consistent meetings with cold email.

Why Cold Email Fails Without an Email Message Fit

If you are asking why cold email doesn’t work, the answer is usually not volume, tooling, or copy length. The real problem is a lack of email message fit. Teams send campaigns before validating the offer, segment, and timing. When those three are misaligned, even strong copy will produce weak cold email reply rates.

Here is what typically goes wrong.

Guessing offers instead of testing demand

Most outbound campaigns start with an internal assumption: “This should be valuable.” That is not the same as market proof. If the offer is unclear, low urgency, or too broad, prospects ignore it. Teams then blame the channel when the actual issue is offer relevance.

Running random A/B tests with no strategy

Many teams test subject lines, CTAs, and intros without first locking the core message. That creates noise, not learning. If the offer and audience are wrong, small copy tests won’t improve performance. You end up with activity that looks scientific but produces no useful insight.

Burning TAM before learning what works

Every low-fit campaign takes a share of your market. When you send weak messaging to a large list, you do not just get poor results. You also reduce the potential for future responses from the same accounts. This is how teams burn through TAM early and then assume outbound is saturated.

Scaling before learning

Scaling a campaign before you have positive reply signals is one of the fastest ways to waste time and budget. More volume only amplifies what is already true. If the message is not landing, sending more emails increases failure, not meetings.

Strong cold email performance comes from sequencing your learning correctly. Validate the offer and message first. Then improve targeting. Then scale. That is how you improve reply quality and protect your market.

What Is Email Message Fit? (And Why It Matters)

Email message fit is the point at which your cold email consistently gets positive replies from the right prospects because your offer, audience, and timing are aligned.

In simple terms, it means you are saying the right thing to the right person at the right moment.

This is closely related to message-market fit in cold email, but it is not exactly the same.

- Message-market fit is broader. It refers to how well your positioning and messaging resonate with a market overall.

- Email message fit is narrower and more execution-focused. It refers to how that messaging performs in cold outbound, with real prospects, in real inboxes.

Why this matters: email message fit comes before scale. If you scale before finding it, you do not get more meetings. You just send more low-performing emails, burn through TAM, and make it harder to learn what actually works.

Before scaling volume, your outreach should show alignment across four variables:

- Right offer: The offer is relevant, clear, and worth a reply.

- Right ICP: You are targeting the segment most likely to care now.

- Right trigger: There is a real reason this prospect should pay attention right now.

- Right timing: Your message matches current priorities, not just generic pain points.

When these four align, reply rates and conversation quality improve, and cold email becomes a repeatable channel rather than a guessing game.

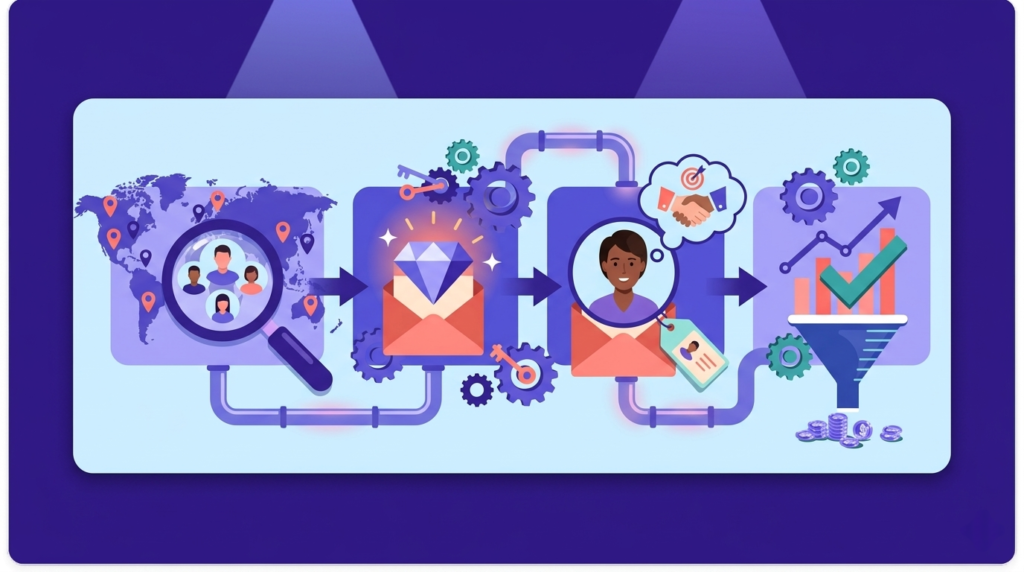

The 4-Phase Email Message Fit Framework (Overview)

A strong cold email strategy is not built by scaling volume first. It is built by running structured tests, tightening targeting, and using real market feedback to improve the offer and message over time. This outbound email framework is designed to help teams find email message fit before they scale. In most cases, the full process takes about 6 to 8 weeks, depending on TAM size, send volume, and how quickly you can identify clear reply patterns. The goal is not just to increase activity. The goal is to create a repeatable system that consistently produces qualified conversations.

Phase Summary

Phase 1: Offer & message testing

Test multiple offers and message angles across a small portion of TAM to identify what gets positive replies and real engagement.

Phase 2: Sniper targeting

Use the strongest Phase 1 signals to narrow your audience and focus on high-intent segments, roles, and triggers.

Phase 3: Re-evaluation

If reply quality is weak, pause scale and reassess the offer, ICP, timing, and campaign complexity before sending more volume.

Phase 4: Market feedback

Run feedback-focused campaigns to learn how the market sees your offer, then use those insights to improve messaging and restart testing with stronger assumptions.

Phase 1 – Offer and Message Testing (Cold Email Experiments)

Phase 1 is where you build evidence. The goal is not to find a clever subject line. The goal is to identify which offer and message angle can generate positive replies from a defined segment. In practice, this is cold email testing focused on learning what the market values, not just what gets opens.

Treat this phase as email offer testing first and copy testing second.

Goal of Phase 1

Your objective is to answer a few core questions quickly:

- Which offer gets the strongest positive reply signal?

- Which pain framing creates real conversation, not polite responses?

- Which ICP segments respond at a higher rate?

- Which message angle is repeatable across similar accounts?

You are looking for patterns, not one-off wins.

How Much of TAM to Use

Use a small portion of your TAM in this phase to learn without burning the market too early.

A practical range is:

- 5 to 15 percent of TAM for initial testing

- Start smaller if your TAM is limited or high-value

- Expand only after you see consistent positive reply quality

This gives you enough volume to compare campaigns while protecting future opportunities.
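As a rough illustration of the arithmetic, the sketch below turns a TAM size and a testing share into a contact budget, then splits it evenly across campaigns. The function name `phase1_test_budget`, the even split, and the example numbers are assumptions made for illustration, not part of the framework itself.

```python
def phase1_test_budget(tam_size, share_percent=10, campaigns=4):
    """Return (total contacts, contacts per campaign) for Phase 1 testing.

    share_percent is capped at 15, the top of the range suggested above.
    Values below 5 are allowed because small or high-value TAMs may
    justify starting smaller.
    """
    if not 0 < share_percent <= 15:
        raise ValueError("share_percent should be above 0 and at most 15")
    if campaigns < 1:
        raise ValueError("campaigns must be at least 1")
    # Integer arithmetic avoids floating-point rounding surprises.
    total = tam_size * share_percent // 100
    per_campaign = total // campaigns
    return total, per_campaign


# Hypothetical example: a 20,000-contact TAM, 10 percent share, 4 campaigns.
total, per_campaign = phase1_test_budget(20_000, share_percent=10, campaigns=4)
print(total, per_campaign)  # 2000 500
```

If the per-campaign number looks too small to compare campaigns meaningfully, it is usually safer to run fewer campaigns than to raise the share of TAM.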

Why Multiple Campaigns Matter

Most teams run one sequence, see weak results, and conclude that cold email is not working. That is a mistake. A single campaign tests only one set of assumptions.

Running multiple campaigns matters because it helps you separate:

- a weak offer from a weak message

- a bad ICP slice from a bad channel

- poor timing from poor execution

It also improves the speed of learning. Instead of waiting weeks to diagnose a single campaign, you compare outcomes across different angles and identify what actually drives reply quality.

The key is controlled variation. Change the offer or angle with intent, then track which combinations produce meaningful responses.

Campaign Types to Test

Use a mix of campaign styles to test different forms of relevance. Keep these brief and structured. You are testing hypotheses, not trying to impress people with complexity.

Standard sequence

Your baseline campaign. Clear pain, clear offer, clear CTA. This becomes the control against which other experiments are measured.

Creative idea

A non-standard angle that reframes the problem, challenges a common assumption, or introduces a sharper point of view. Useful for standing out in crowded markets.

Lookalike accounts

Campaigns targeting companies that resemble current customers in size, industry, motion, or team structure. This tests whether your existing wins are transferable.

Lead magnet

Offer something useful with immediate value, such as a benchmark, teardown, checklist, or audit. Good for reducing friction and surfacing interest when the direct sales ask is too early.

Loom video

A short personalized video for higher-value accounts or complex offers. This tests whether added context improves response quality enough to justify the effort.

Case study variant

Message built around a relevant customer outcome or transformation. Best when you have strong proof and want to test whether social proof improves trust and response rate.

Phase 1 works when you stay disciplined. Test enough campaigns to learn, use a limited share of TAM, and judge success by positive reply quality, not just activity.
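One simple way to compare Phase 1 campaigns is to rank them by positive reply rate instead of raw reply or open counts. The sketch below assumes you can export sends and positive replies per campaign. The `CampaignResult` class, the campaign names, and the numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CampaignResult:
    name: str
    sent: int
    positive_replies: int

    @property
    def positive_rate(self) -> float:
        # Guard against division by zero for campaigns that never sent.
        return self.positive_replies / self.sent if self.sent else 0.0


def rank_campaigns(results):
    """Sort campaigns from highest to lowest positive reply rate."""
    return sorted(results, key=lambda r: r.positive_rate, reverse=True)


# Hypothetical Phase 1 results; the standard sequence acts as the control.
results = [
    CampaignResult("standard_sequence", sent=500, positive_replies=3),
    CampaignResult("lead_magnet", sent=500, positive_replies=7),
    CampaignResult("case_study", sent=500, positive_replies=1),
]

for r in rank_campaigns(results):
    print(f"{r.name}: {r.positive_rate:.2%}")
```

With samples this small, treat the ranking as a direction to retest, not a final verdict.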

Phase 2 – Sniper Targeting for High-Intent Leads

Phase 2 is where you stop testing broadly and start applying what worked in Phase 1. You already have early winners, so you now know which offer-message combinations can elicit positive replies. The next step is to improve cold email targeting so those winning messages reach prospects who are more likely to care right now.

This is where outbound lead segmentation becomes the main driver of results.

Use Phase 1 Winners to Build Better Targeting

Start with what actually performed, not what sounds logical internally.

Look at your Phase 1 data and ask:

- Which roles replied most often?

- Which company types produced the best conversations?

- Which industries or subsegments showed urgency?

- Which triggers appeared in positive-reply accounts?

- Which offers worked best for which ICP slices?

Then build segmented lists around those patterns. If a message worked for one segment, do not assume it will work everywhere. Pair each winning angle with the specific account type and trigger that made it work.

That is the core of sniper targeting.
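The questions above amount to grouping Phase 1 sends by segment and comparing positive reply rates. Here is a minimal sketch, assuming each send is a dict with hypothetical `role`, `trigger`, and `positive_reply` fields. The `min_sent` cutoff is also an assumption, added so that tiny segments do not look like winners by accident.

```python
from collections import defaultdict


def segment_rates(records, keys=("role", "trigger"), min_sent=50):
    """Return (segment, positive reply rate) pairs, best segment first.

    Segments with fewer than min_sent sends are dropped as too small
    to draw conclusions from.
    """
    totals = defaultdict(lambda: [0, 0])  # segment -> [sent, positive]
    for rec in records:
        segment = tuple(rec[k] for k in keys)
        totals[segment][0] += 1
        if rec["positive_reply"]:
            totals[segment][1] += 1
    rates = {
        seg: pos / sent
        for seg, (sent, pos) in totals.items()
        if sent >= min_sent
    }
    return sorted(rates.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical Phase 1 export: 100 sends each to two segments, 5 to a third.
records = (
    [{"role": "VP Sales", "trigger": "funding", "positive_reply": i < 4} for i in range(100)]
    + [{"role": "RevOps", "trigger": "hiring", "positive_reply": i < 1} for i in range(100)]
    + [{"role": "CEO", "trigger": "none", "positive_reply": True} for _ in range(5)]
)

for segment, rate in segment_rates(records):
    print(segment, f"{rate:.1%}")
```

In this made-up data the CEO segment replied every time but is excluded, because five sends are not enough to build a targeted list around.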

Why List Quality Beats Copy

Most teams overestimate copy and underestimate targeting. Copy matters, but it cannot create demand where none exists.

List quality usually has more impact because it controls:

- whether the prospect has the problem

- whether the problem is a current priority

- whether your offer fits their stage

- whether the timing makes a reply likely

A strong message sent to the wrong list will underperform. A clear message sent to the right list often wins even without heavy personalization.

This is why Phase 2 often improves reply quality faster than another round of copy edits. Better list inputs produce better outcomes across the entire campaign.

Sniper Targeting Signals

Use high-intent signals that indicate change, urgency, or likely relevance. These signals make outreach feel timely instead of random.

Recently funded companies

Funding events often create pressure to hire, scale systems, improve performance, or accelerate pipeline growth. This can increase urgency and budget availability.

New hires in leadership roles

New executives and department leaders often review tools, vendors, and processes early in their tenure. They are more open to change than long-settled teams.

Hiring signals

Job postings can reveal active priorities, team gaps, and upcoming initiatives. A company hiring SDRs, RevOps, or security roles is signaling where attention is going.

Technographics

The tools a company already uses can indicate compatibility, maturity, and likely pain points. This helps you tailor outreach to a real operating context.

Former employees of customers

If someone previously worked at one of your customers, or at a company that used your solution category or approach, they may already understand the problem and its value more quickly.

Rapid headcount growth

Fast-growing teams usually experience process breakdowns, coordination issues, and tooling strain. Those conditions often create strong outbound opportunities.

Custom outbound triggers

Build triggers tied to your offer, such as product launches, pricing changes, market expansion, partnerships, leadership announcements, or compliance deadlines. The more specific the trigger, the stronger the relevance.

Phase 2 is where you turn early message wins into a repeatable targeting system. Use Phase 1 evidence, prioritize list quality over copy tweaks, and anchor campaigns to real signals of intent.

Phase 3 – When Cold Email Isn’t Working (Re-Evaluation Phase)

Phase 3 is the reset point. If your campaign is underperforming, the right move is not always more volume, more personalization, or more sequence steps. The right move is to slow down and re-evaluate what the market is telling you.

A practical benchmark for this phase is simple:

- If you are not getting at least 1 positive reply per 500 emails, treat it as a re-evaluation problem

That does not automatically mean cold email is dead or your team is bad at execution. It means your current combination of offer, ICP, message, timing, or deliverability is not producing enough signal to justify scaling.
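The benchmark is easy to check against campaign totals. The sketch below is one way to express it. The function name `needs_reevaluation` and the example counts are illustrative assumptions, and `Fraction` is used only so that the comparison at exactly 1 in 500 is not affected by floating-point rounding.

```python
from fractions import Fraction

# Benchmark from the text: at least 1 positive reply per 500 emails.
BENCHMARK = Fraction(1, 500)


def needs_reevaluation(sent, positive_replies, benchmark=BENCHMARK):
    """Return True when the positive reply rate falls below the benchmark."""
    if sent <= 0:
        raise ValueError("sent must be a positive number of emails")
    return Fraction(positive_replies, sent) < benchmark


# Hypothetical totals for two campaigns of 2,000 emails each.
print(needs_reevaluation(2000, 3))  # True: 3 in 2,000 is below 1 in 500
print(needs_reevaluation(2000, 4))  # False: 4 in 2,000 is exactly 1 in 500
```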

This is the phase where you focus on improving cold email replies by removing complexity and retesting the fundamentals.

When to Enter Re-Evaluation Mode

If “cold email isn’t working” is becoming the internal narrative, pause and check these variables before making random changes:

- Is the offer clear and relevant to this segment?

- Is the pain point urgent enough to earn a reply now?

- Is the ICP too broad or poorly segmented?

- Are you adding too much personalization without improving relevance?

- Are deliverability issues hiding real performance?

Phase 3 is not about panic. It is about diagnosis.

Why AI Personalization Can Backfire

AI personalization can look impressive in a draft and still hurt results in-market.

Common ways it backfires:

- It adds personalized details that do not connect to the offer

- It creates long intros that delay the real point

- It sounds manufactured, which reduces trust

- It increases production time without improving response quality

- It makes teams think they are being relevant when they are only being specific

Specific does not equal relevant. Mentioning a prospect’s recent post, podcast, or company update only helps if it directly ties to a meaningful reason to respond.

In this phase, over-personalization often obscures the real issue: weak positioning or poor targeting.

Simpler Campaigns Usually Win Here

When performance is weak, simpler campaigns often outperform complex ones because they force clarity.

At this stage, your goal is not to impress prospects. Your goal is to create clean signal. Simple messages make it easier to see whether the offer is resonating.

What often works better in Phase 3:

- Short emails with one clear idea

- One CTA instead of multiple asks

- Direct language instead of layered positioning

- Fewer sequence steps with clearer intent

- Plain-text formatting with minimal friction

You are trying to reduce noise so the market can give you a clear answer.

Formats to Use in Re-Evaluation

Poke-the-bear emails

These are short follow-ups that challenge silence and invite a quick response. They work because they reduce cognitive load and make it easy to reply with a yes, no, or not now. They are especially useful when your earlier emails were too long or too polished.

One-line emails

One-line emails are effective in this phase because they test pure relevance. No long context. No heavy personalization. Just a direct reason for outreach and a simple ask. If even a one-line message cannot generate interest, the issue is usually not copy length. It is fit.

What Phase 3 Should Produce

A good re-evaluation phase should give you one of three outcomes:

- A clearer winning angle worth retesting at moderate volume

- Evidence that the ICP or trigger needs to change

- Confirmation that the offer needs repositioning before more outbound

Phase 3 is how you stop guessing and start learning again. If you want to improve cold email replies, simplify the campaign, question your assumptions, and let response quality guide the next move.

Phase 4 – Feedback Campaigns to Understand Your Market

Phase 4 is where outbound becomes a research engine. Instead of pushing for meetings, you use cold email feedback campaigns to learn how the market sees the problem, your offer, and the alternatives. This is a form of outbound market research that helps you improve your strategy before you scale up volume.

When teams skip this phase, they keep guessing. When they use it well, they uncover why replies are weak, which positioning angles are unclear, and what buyers actually care about.

Why Feedback Emails Often Outperform Sales Emails

Feedback emails often get better response rates than direct sales emails because they ask for insight, not commitment.

Prospects are more likely to reply when:

- the ask is low pressure

- the message is clearly about learning

- they can share an opinion quickly

- they do not feel like they are being pushed into a call

This does not mean feedback campaigns replace selling. It means they help you collect signal when your current sales messaging is not producing enough of it.

In many cases, a short feedback ask gives you more useful market data than another sales sequence with heavier personalization.

What You Learn in a Feedback Campaign

A strong feedback campaign can reveal:

- Whether the problem you are leading with feels urgent

- How buyers describe the problem in their own words

- Which objections show up repeatedly

- What alternatives they are using today

- Whether your offer is unclear, mistimed, or positioned incorrectly

- Which segments react differently to the same message

These insights are valuable because they come from real prospects in your target market, not internal assumptions.

How Feedback Insights Loop Back Into Phase 1

Phase 4 is not the end of the framework. It feeds the next round of testing.

Use feedback insights to improve Phase 1 by:

- rewriting the offer around real buyer language

- adjusting pain framing based on actual objections

- narrowing the ICP to segments with clearer urgency

- building new message angles from repeated themes

- testing stronger triggers surfaced during feedback conversations

This creates a learning loop. Phase 1 tests assumptions. Phase 4 improves those assumptions with market evidence. Then you test again with better inputs.

When to Use Feedback Campaigns

New markets

If you are entering a new vertical, geography, or buyer segment, feedback campaigns help you learn faster before you burn TAM with direct sales messaging.

Weak results

If reply quality is low and Phase 3 re-evaluation shows no clear winner, feedback outreach can uncover what your current campaigns are missing.

Repositioning offers

If you are changing your offer, targeting a different pain point, or moving upmarket/downmarket, feedback campaigns help validate whether the new positioning makes sense to buyers.

Phase 4 is what makes this framework durable. It turns cold email from a pure acquisition channel into a market learning system. The more accurately you understand your market, the faster you can return to Phase 1 with better offers, better messaging, and better odds of consistent meetings.

Common Cold Email Mistakes That Kill Results

Most cold email mistakes are not tactical. They are sequencing mistakes. Teams do the right things in the wrong order, then assume outbound does not work. If results are weak, check for these common outbound email errors first.

Testing copy before offers

Teams often test subject lines, intros, and CTAs before validating whether the offer is actually compelling. If the offer is weak, copy improvements will not fix reply quality.

Over-personalizing with AI

AI can make emails look personalized while reducing clarity and relevance. Extra details about the prospect do not matter if the message does not connect to a real priority or trigger.

Ignoring TAM size

Sending broad campaigns too early can burn through a limited market fast. If TAM is small, every low-fit campaign has a higher opportunity cost.

Scaling too early

Increasing volume before you have consistent positive replies only amplifies poor fit. Scale should come after you validate the offer, ICP, and message.

Skipping deliverability checks

Even strong messaging fails if emails are not landing in the inbox. Poor inbox placement, domain issues, and provider-level filtering can make a good campaign look like a bad one.

The pattern is simple. Fit first, then scale. Most teams lose performance when they reverse that order.

Email Message Fit Still Fails Without Deliverability

You can have strong email message fit and still get weak results if your emails are not reaching the inbox.

This is where cold email deliverability becomes non-negotiable. Reply rates do not only reflect message quality. They also reflect whether your campaigns are actually being seen. If inbox placement is inconsistent, your team may misdiagnose a deliverability problem as a messaging problem and start changing the wrong variables.

A few issues commonly hide behind “bad performance”:

- Provider-level performance differences (Gmail, Outlook, Microsoft 365, and other providers do not behave the same)

- Spam filtering that blocks or buries messages even when your copy is solid

- Silent inbox issues, such as throttling, poor domain reputation, or placement drops that do not show up clearly in basic sending tools

This is why message testing and deliverability checks have to run together. Otherwise, you can burn TAM, discard good offers, and over-edit campaigns that were never getting fair visibility.
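One rough way to spot a provider-level problem is to break reply rates down by recipient mailbox provider and flag any provider that falls far below the overall rate. The sketch below is only a heuristic: the `flag_weak_providers` name, the `ratio` threshold of 0.5, and the sample numbers are all assumptions, and a flagged provider is a reason to run a proper inbox placement check, not proof of a deliverability issue.

```python
def flag_weak_providers(stats, ratio=0.5):
    """Flag providers whose reply rate is below ratio * overall reply rate.

    stats maps a provider name to a (sent, replies) tuple.
    """
    total_sent = sum(sent for sent, _ in stats.values())
    total_replies = sum(replies for _, replies in stats.values())
    if total_sent == 0:
        return []
    overall = total_replies / total_sent
    return sorted(
        provider
        for provider, (sent, replies) in stats.items()
        if sent > 0 and replies / sent < ratio * overall
    )


# Hypothetical per-provider totals for one campaign.
stats = {
    "gmail": (1000, 20),
    "microsoft365": (1000, 4),
    "other": (500, 9),
}
print(flag_weak_providers(stats))  # ['microsoft365']
```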

If you want to go deeper, read the full cold email deliverability guide.

Final Thoughts on Email Message Fit

Most teams struggle with cold email because they treat it like a throughput problem. They send more, test small copy changes, and hope volume will create momentum. In practice, sustainable results come from a better sequence of decisions. You need a clear offer, a defined audience, strong timing, and messaging that reflects what the market actually cares about.

That is why the strongest cold email best practices are built around learning rather than activity. A reliable outbound email strategy is not about squeezing more sends out of a weak campaign. It is about finding email message fit, validating it with real replies, and then scaling with confidence.

If you take three things from this framework, make them these:

- This is a system (not a hack)

- Learning beats volume

- Fit before scale

When teams follow that order, cold email becomes more predictable, more efficient, and far more likely to produce consistent meetings.